Your SIEM isn't Expensive. Your Data Strategy is.

Every quarter, the same conversation plays out in boardrooms across Europe: the SIEM renewal lands on the CFO's desk, and suddenly cybersecurity is a cost problem again. But here's what nobody in that room wants to hear. The tool isn't the problem. The way you feed it is.

Machine data is growing at 25–35% year over year. Security budgets aren't. That gap doesn't close itself. And yet, most organizations respond by doing exactly what got them here: ingesting more, paying more, and hoping someone downstream figures out what's useful.

They won't. Not at this scale.

The "Dump & Pray" Anti-Pattern

Let's call it what it is. Most enterprises treat their SIEM like a digital landfill. Every log, every trace, every benign DNS query, all routed into the same high-cost indexer. No prioritization. No intent. Just volume.

We see this pattern in almost every engagement we run. The consequences are predictable:

You're paying premium prices to store haystacks, not find needles. In a recent assessment for a financial services client ingesting around 400 GB/day, we found that over 60% of indexed data consisted of pure noise: health check pings, load balancer keep-alives, debug-level application logs, DHCP lease renewals. All of it sitting in the hot tier at €2.50/GB, consuming roughly €800K per year in ingestion costs for data no analyst ever queries.

Your analysts are drowning, not investigating. When everything is "important," nothing is. The teams we work with consistently report that 70%+ of their time goes into filtering noise before they can even begin triage. That's not a people problem. That's an architecture problem.

You're locked in without knowing it. When all your data sits in a single vendor's proprietary format, switching costs become existential. We've seen organizations delay critical platform decisions by 18+ months because they couldn't extract their own data. That's not a partnership. It's a dependency.

Start with the Outcome, Not the Data

The fix isn't buying another tool. It's changing the sequence. Instead of collecting everything and reverse-engineering use cases from the pile, you start with the question: What decision does this data need to support?

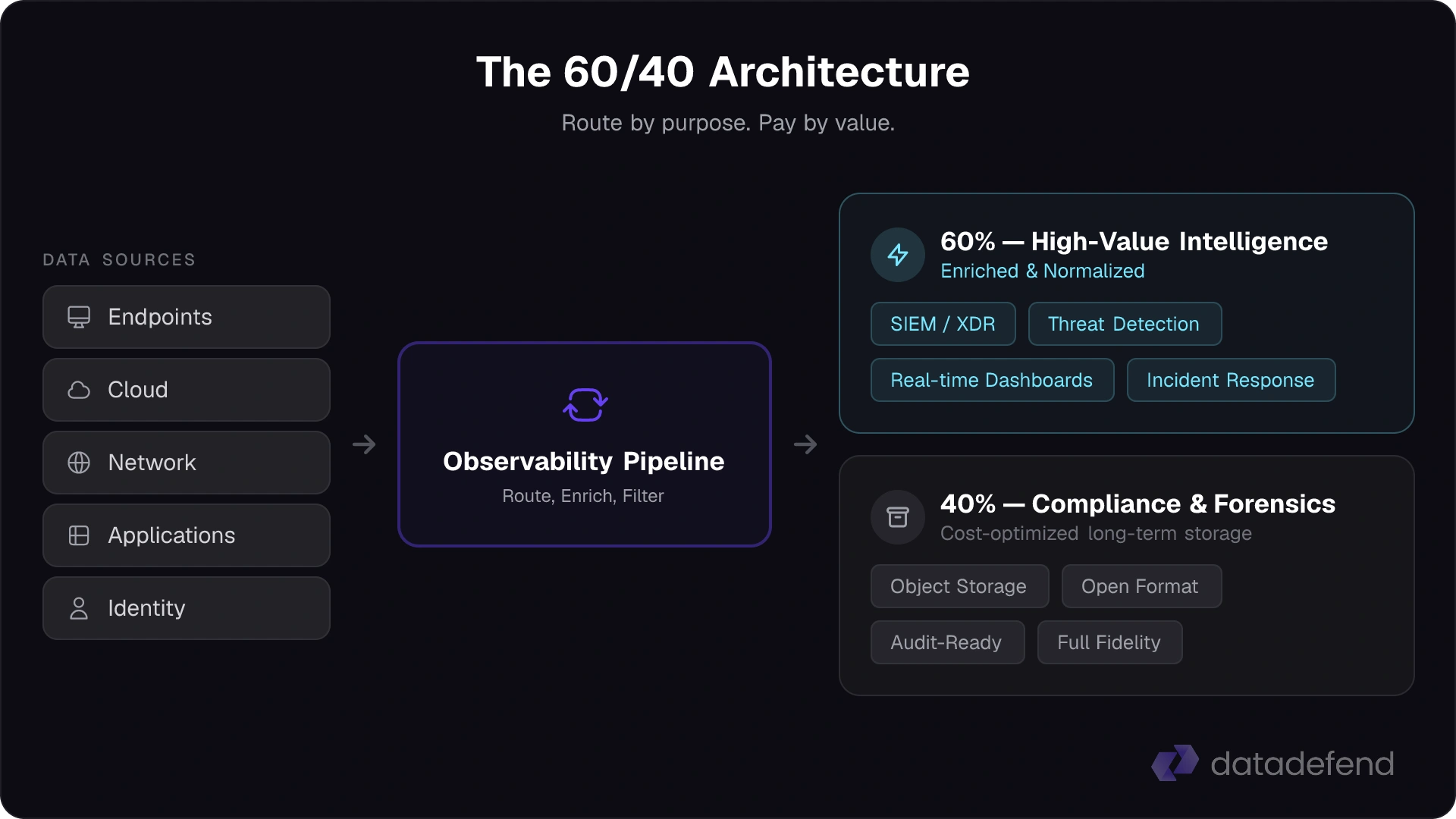

This is the shift toward what the industry calls an Observability Pipeline: an intelligent routing layer between your data sources and your destinations. Think of it as a control plane for your machine data. Before anything hits an indexer, it gets classified, enriched, and routed based on its actual purpose.

In practice, we implement this as a strategic split:

~40%: Compliance & Forensic Archive. This is "checkbox data." It's vital for auditors, regulators, and legal holds, but it has zero value for real-time detection. We route it to low-cost, open-format object storage. Full fidelity, fully searchable, but at a fraction of the ingestion cost. Think S3-compatible storage at €0.02/GB instead of €2.50/GB in your SIEM.

~60%: High-Value Intelligence. This is the data your analysts actually need: enriched, normalized, deduplicated, and delivered to your analytics engine in a format that's ready for decisions, not parsing. Threat-relevant logs. Correlated security events. Context-rich telemetry.

The result isn't just cost savings, though those are significant. It's a fundamentally different operating model. Your analysts stop being data janitors and start being investigators.

The Mindset Shift for 2026

The most important change isn't technical. It's strategic.

Stop thinking in tools. Start thinking in pipelines. When your sources are decoupled from your destinations, you gain something most enterprises have quietly lost: the freedom to choose. The freedom to route data to the best analytics engine for the job, not the one you're locked into. The freedom to control costs at the architectural level, not through painful annual negotiations.

Every euro you spend on data ingestion should be tied to an outcome. If it isn't, you're not investing in security. You're subsidizing a landfill.

Ready to Get Started?

Contact us for a free consultation and learn how we can improve your security program.